Much of the focus this AI Tech Wave has been on the three mega-AI IPOs expected this year with a combined valuation of over three trillion dollars. But it’s not just SpaceXai, Anthropic and OpenAI who are poised to go public.

This week the US markets are focused on Cerebras Systems, a next-generation AI inference chip startup that is likely to raise its expected valuation range to $35 billion and raise almost $5 billion. I discussed the current boom in chip stocks this week in AI-RTZ #1083, with the chip stocks “adding $3.8 trillion in market cap in just six weeks”.

As The Information explains in “Cerebras IPO Will Test Investor Appetite for AI Chip Startups”:

“How many AI chip designers can the public market support? We’ll get a sense of that on Thursday when Cerebras Systems, the biggest of a new generation of chip designers dedicated to AI, is expected to go public at a valuation of $35 billion. If it follows the pattern of other AI-related IPOs, such as cloud firm CoreWeave, Cerebras will be a big hit.”

“CoreWeave went public a year ago at $40 a share and, despite a lot of ups and downs, it closed on Friday at $114. (Most other tech IPOs from last year, that had no AI exposure, have had a very different experience.) CoreWeave has been well received even though it is burning through billions of dollars in cash right now as it ramps up its network of data centers. Investors’ favorable reaction to it suggests Wall Street will look past the fact that Cerebras is also burning cash and focus on its AI exposure. (Demand for stock in the IPO is also strong, Bloomberg reported on Friday).”

The public markets for now are leaning into the chip opportunity, something I discussed yesterday:

“Indeed, we are at a moment of peak AI infrastructure optimism, with shortages of computing capacity apparent constantly (hence Anthropic’s deal with SpaceX this past week). Of course, the capacity shortfall is an argument for CoreWeave, whose customers include Anthropic, Microsoft, OpenAI and Meta Platforms, but not necessarily for Cerebras. Is there the same kind of demand for another AI chip supplier?”

“Buyers of chips—mostly cloud firms and big AI developers—have plenty of choice already. Aside from Nvidia, which dominates the field, there are AI chips from Advanced Micro Devices, Google and Amazon. Meta and Microsoft are developing their own chips. Chinese firms have Huawei and many others. So what’s Cerebras’ pitch?”

“Cerebras does have OpenAI as a customer (and shareholder), even though OpenAI is also developing its own chip in concert with Broadcom. Cerebras also struck a deal to supply its chip to Amazon Web Services as a supplement to Amazon’s Trainium AI chip. But there’s no getting around the fact that the chip market is crowded. That’s perhaps why some other AI chip startups have effectively sold themselves: Groq licensed its tech to Nvidia, which hired the startup’s top people, for instance. And Meta hired a key group of engineers from another chip startup, Graphcore.”

Stratechery does a good job explaining Cerebras’ technical innovations in AI chips vs Nvidia and others in “The Inference Shift”, summarized in this bit here:

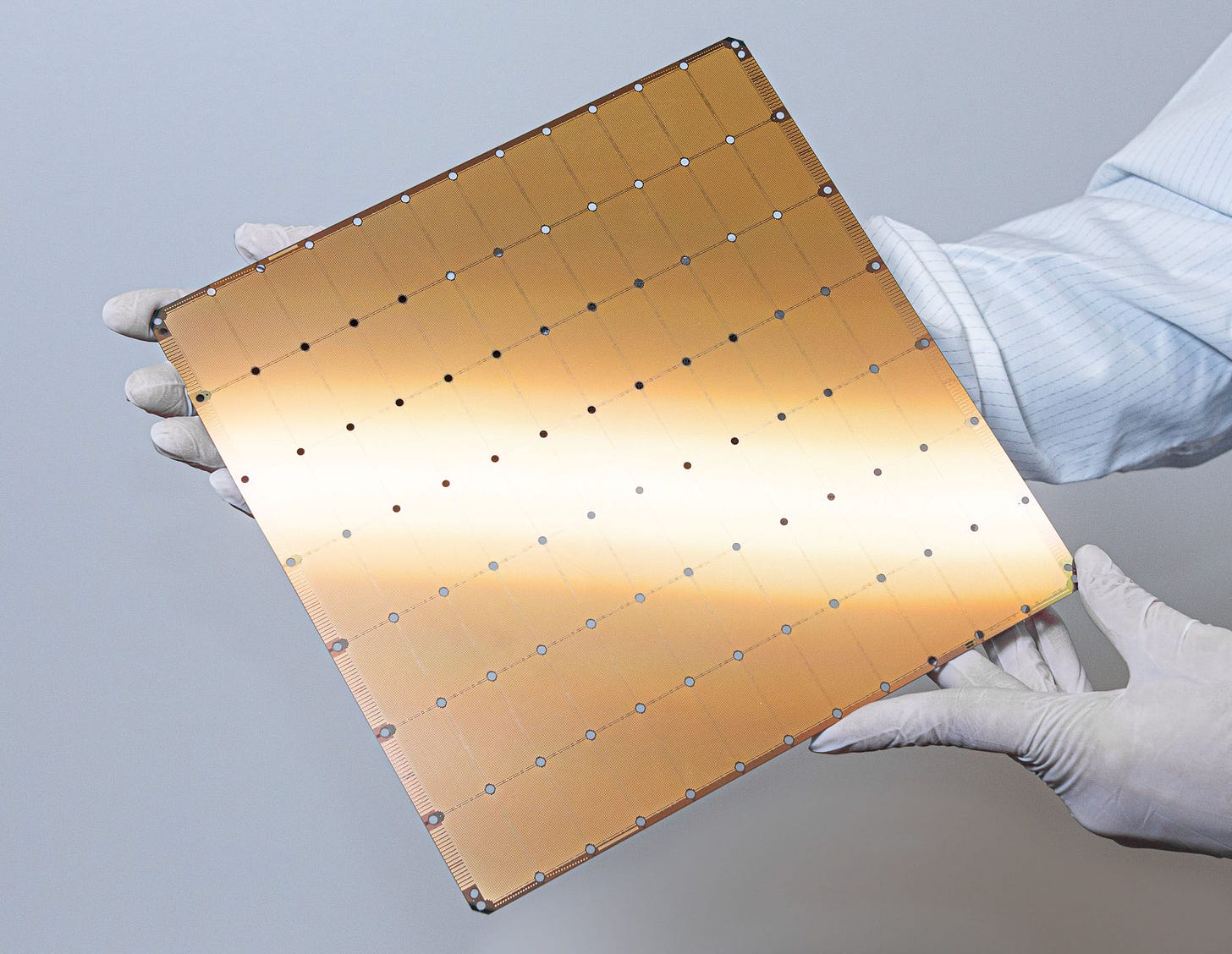

“Cerebras makes something completely different. Cerebras, has invented a way to lay down wiring across the so-called “scribe lines” that are the boundary between reticle exposures, making the entire wafer into a single chip with no need for relatively slow chip-to-chip linkages.”

“The net result is a chip with a lot of compute and a lot of SRAM that is blisteringly fast to access. To put it in numbers, the WSE-3 (Cerebras’ latest chip) has 44GB of on-chip SRAM at 21 PB/s of bandwidth; an [Nvidia] H100 has 80GB of HBM at 3.35 TB/s. In other words, the WSE-3 has just over half the memory of an H100, but 6,000 times the memory bandwidth.”

“The reason to compare the [Cerebras] WSE-3 to an [Nvidia] H100 is that the H100 is the chip most used for inference — and inference is clearly what Cerebras is most well-suited for. You can use Cerebras chips for training, but the chip-to-chip networking story isn’t very compelling, which is to say that all of that compute and on-chip memory is mostly just sitting around; what is much more interesting is the idea of getting a stream of tokens at dramatically faster speed than you can from a GPU.”
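As a quick sanity check on the figures quoted above, here is a minimal Python sketch that recomputes the two ratios. It uses only the numbers in the quote and assumes decimal units (1 PB/s = 1,000 TB/s); the variable names are mine, purely for illustration.

```python
# Figures as quoted above. Assumption: decimal units, so 1 PB/s = 1,000 TB/s.
WSE3_SRAM_GB = 44            # Cerebras WSE-3 on-chip SRAM
WSE3_BANDWIDTH_TBPS = 21 * 1000  # 21 PB/s expressed in TB/s
H100_HBM_GB = 80             # Nvidia H100 HBM capacity
H100_BANDWIDTH_TBPS = 3.35   # Nvidia H100 memory bandwidth

# How much memory the WSE-3 has relative to the H100.
memory_ratio = WSE3_SRAM_GB / H100_HBM_GB

# How much faster the WSE-3's memory is relative to the H100's.
bandwidth_ratio = WSE3_BANDWIDTH_TBPS / H100_BANDWIDTH_TBPS

print(f"memory ratio:    {memory_ratio:.2f}")       # 0.55
print(f"bandwidth ratio: {bandwidth_ratio:,.0f}x")  # 6,269x
```

So “just over half the memory” works out to 0.55, and the bandwidth gap is roughly 6,300 times on these numbers, consistent with the rounded “6,000 times” in the quote.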

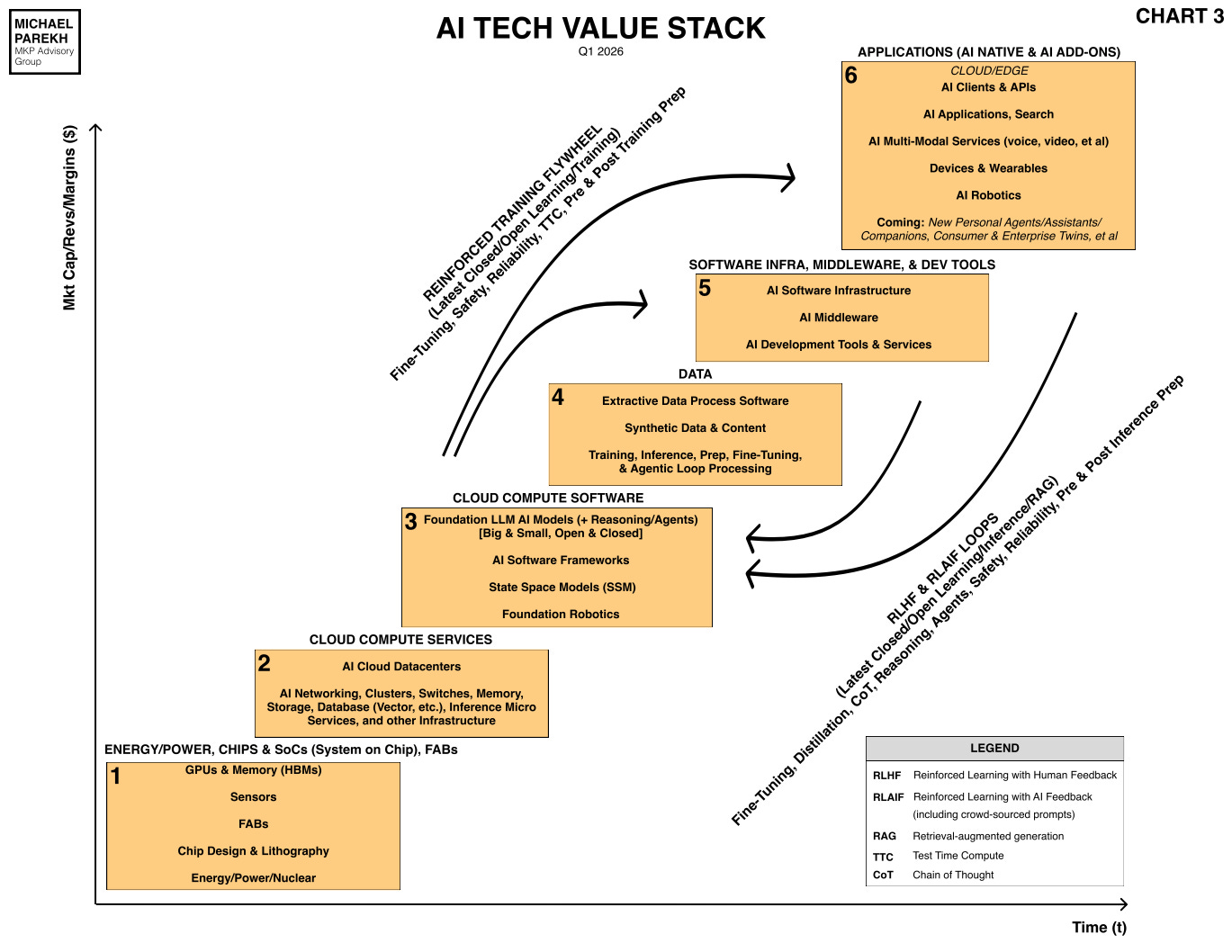

I go into these technical details to underline how AI innovations, not just in chips but across the AI Tech Stack from the chip layer in Boxes 1 and 2 below, have a flood of iterative and at times exponential improvements ahead.

The private markets thus far, and soon the public markets, are poised to reward some of these AI efforts across the AI Tech Stack, both here and overseas, especially in China.

But the opportunity sets here are not zero-sum. And there’s a ton of ‘TAM’ (total addressable market) to be created up and down the tech stack soon.

The mega-AI IPOs, Cerebras et al, are just the appetizers this year. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)
