Recently, Anthropic introduced Claude Mythos Preview, a general-purpose model with advanced coding and agentic capabilities, to select organisations as part of a new initiative called Project Glasswing. The model has the ability to autonomously find thousands of high-severity, zero-day vulnerabilities in major operating systems and web browsers. Obviously, the waves were felt in the cybersecurity space for better and for worse.

However, unlike the SaaSPocalypse, Claude Mythos is not going to replace cybersecurity professionals. While that is some good news in a world that is scared of AI replacing them or making their roles redundant, the ugly side is that the very tech will also amplify the scale of cyberattacks.

This makes us ponder: how has AI increased security risks? And are these risks only restricted to models, or do they pose a more severe threat? If yes, how are we planning to deal with it? Let’s find out in this edition of The AI Shift.

The Silent Evolution Of Cyber Threats

For years, cybersecurity evolved alongside infrastructure shifts — from web applications to cloud. With AI, that progression has taken a more complex turn.

Unlike earlier layers, AI is not just an interface sitting atop systems. It is deeply embedded in enterprise workflows, decision-making engines, and data pipelines.

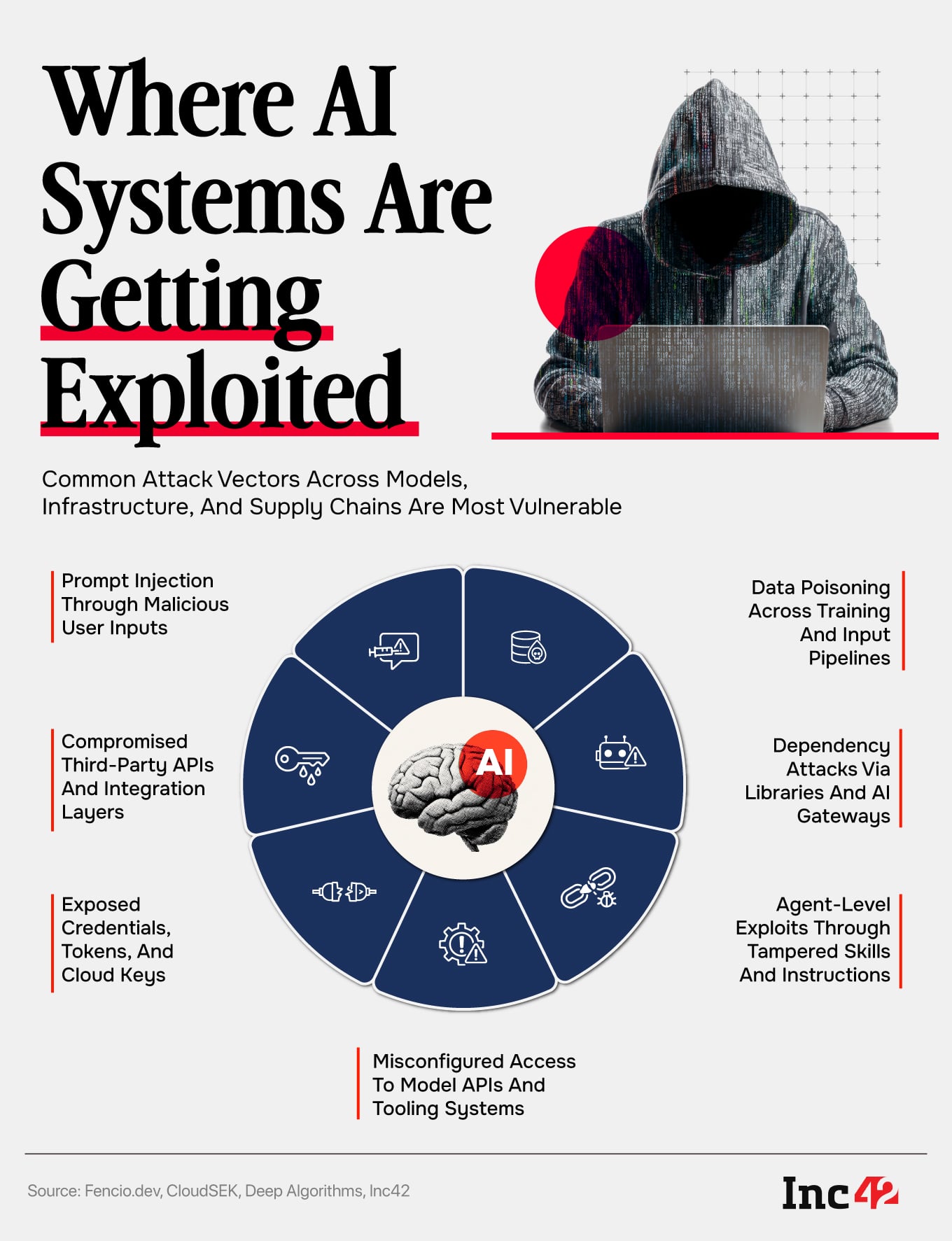

At the application layer, attacks such as prompt injection are already emerging, where malicious instructions are embedded into inputs to manipulate model behaviour.

But as JP Mishra, founder and CEO of Deep Algorithms, an Indian cybersecurity company that primarily serves the BFSI sector, pointed out, the bigger concern is how invisible these attacks are.

Unlike traditional exploits, these attacks don’t look malicious. They resemble normal text, documents, or queries, making it difficult for both humans and systems to distinguish intent.

“The AI might treat something that looks harmless to us as a command,” he said.

Alongside this, risks like data poisoning are quietly emerging, where attackers manipulate training or input data over time to skew outputs.

But the bigger shift is happening beneath that layer.

According to Rahul Sasi, the cofounder and CEO of cybersecurity company CloudSEK, the AI infrastructure, including model pipelines, APIs and orchestration systems, is becoming a viable target. Misconfigured access to tools like Google Gemini or poorly secured integrations can expose sensitive enterprise data.

Then there is the architectural layer where AI agents operate through skills or instruction sets. If these are tampered with, the system can execute malicious actions without triggering traditional security alerts.

Unlike malware, these attacks do not rely on files or binaries, making them harder to detect using conventional tools, reaffirming Mishra’s point above.

Supply Chains: The New Weak Link

Attackers are not always going after the AI directly. They are going after what the AI depends on. This includes third-party libraries, APIs, data sources, and orchestration tools that power AI systems.

Aashish Bharadwaj, the cofounder of Fencio.dev, a security-focused startup for AI agents, shared a recent example of a LiteLLM breach, where attackers compromised a dependency library used by an AI gateway.

“The system itself remained untouched, but the breach propagated through its dependencies, exposing sensitive information downstream,” he said.

What makes these attacks harder to detect is that AI systems trust their inputs and integrations by default. If an agent is connected to a compromised API or tool, it will continue interacting with it — unknowingly ingesting or propagating malicious data.

In another example, OpenAI disclosed a supply chain incident involving a compromised Axios dependency in its macOS signing workflow. While no breach was reported, the episode highlighted how indirect dependencies can quietly become high-impact attack vectors in modern AI pipelines.

Sasi of CloudSEK added that exposed credentials, publicly accessible dashboards and plaintext cloud keys are emerging as common vulnerabilities in the AI infrastructure world.

However, what’s even scarier is that the challenge extends beyond individual systems in the fintech space. With increasing reliance on SaaS integrations and third-party vendors, supply chain risks have grown significantly in the sector.

A Speed Mismatch In Cybersecurity

Beyond new attack surfaces, AI is fundamentally changing the speed and scale of cyber threats. AI is making threats faster, more personalised, and easier to scale.

Attackers can now continuously scan systems, identify vulnerabilities, and exploit them without waiting for manual triggers. As Mishra of Deep Algorithms put it, this has turned cyberattacks into an always-on process.

To counter this, some companies are moving towards continuous threat exposure management (CTEM) models, where systems are constantly stress-tested and not periodically audited.

Neeraj Chauhan, the global CISO of PayU, frames this as a growing asymmetry in the fintech space. While AI is improving fraud detection, it is also enabling attackers to evolve faster, from basic fraud to highly sophisticated, real-time threats.

He highlighted three emerging gaps: AI-generated deepfakes bypassing KYC systems, AI systems themselves becoming attack surfaces, and attack timelines shrinking from weeks to hours — often faster than human teams can respond.

“Most enterprises are still defending against a human-speed adversary that no longer exists,” says Shayak Mazumder of Adya.ai, a unified dev platform for enterprises, adding that the gap between offensive and defensive capabilities is narrowing — but not in favour of defenders.

In India, this challenge is compounded by preparedness gaps. Many organisations are still in the early stages of AI adoption and lack a clear understanding of how these systems can be exploited.

Sectors like BFSI are relatively ahead due to regulatory pressure, but even there, the rapid expansion of digital infrastructure, from UPI to AI-driven services, has significantly widened the attack surface.

Rewriting The Cybersecurity Playbook

One of the most widely adopted approaches to AI security today is the use of guardrails, which are predefined rules to restrict model behaviour. But experts caution that this approach has limitations.

Guardrails work much like blacklists in traditional cybersecurity. They attempt to block known bad behaviours. However, attackers can often bypass them through alternative phrasing or contextual manipulation.

Instead, enterprises are beginning to shift towards more structural approaches:

- Least Privilege Access: Limiting what an AI system can see and do, ensuring that even if compromised, its impact is contained.

- Continuous Visibility: Understanding how attack paths evolve, including how AI systems themselves might identify vulnerabilities.

- Resilience-First Design: Assuming breaches will occur and building systems that can detect, respond and recover quickly.

FYERS, an AI-first online trading platform, combines strong authentication, role-based access controls and real-time behavioural monitoring with ML-driven detection systems to surface anomalies early, as explained by its cofounder Yashas Khoday. Similarly, Deep Algorithms’ systems continuously test themselves rather than relying on periodic checks.

For PayU, this shift is reflected in tools like Ezer, an internal security system designed to move from reactive defence to anticipatory response. The platform continuously maps emerging AI threats to infrastructure and the vendor ecosystem, helping prioritise risks before they escalate.

AI is reshaping cybersecurity in ways that are both powerful and unpredictable. As attack surfaces expand across systems, supply chains, and infrastructure, and threats become faster and harder to detect, organisations must rethink ways to become hypervigilant.

Top Stories From India & Around The World

- Caution Envelops AI Investors: Nearly 48% of investors see AI and robotics as the most investment-ready segment, but only 7% are willing to pay premium valuations. Over 56% believe India’s AI ecosystem is still 3-5 years behind China, with caution rising due to model commoditisation and weak differentiation.

- Exotel Acquihires Dubverse: Exotel has brought in Dubverse’s founding team to strengthen its AI-led CX stack, adding capabilities in multilingual voice AI and conversation analytics for enterprise use cases.

- Era Of AI Washing: Workforce cuts at companies like Oracle are being driven by capital shifts toward AI infrastructure rather than direct job replacement, with experts calling out cost optimisation and “AI washing” as key factors.

- Meta Launches Muse Spark: Meta unveiled Muse Spark, a multimodal model powering its AI assistant with capabilities like parallel agents, visual understanding, and coding. It will roll out across WhatsApp, Instagram, Facebook, and AI glasses.

- TraqCheck Bags $8 Mn: The enterprise tech startup has raised about ₹74.6 Cr in its Series A funding round led by IvyCap Ventures to scale its existing real-time conversational AI agent. Founded in 2020, TraqCheck is an AI-driven platform that helps enterprises conduct background checks of employees.

- Perplexity Hits $500 Mn Revenue Mark: Perplexity AI has scaled its revenue from $100 Mn to $500 Mn while growing team size by just 34%, CEO Aravind Srinivas said on X, giving the credit to its computer pivot. The startup expects to double revenue again in 2026, as it doubles down on building AI-powered tools for founders and small businesses.

The Weekly Buzz: How An Engineer Cracked Google’s AI Watermark

Google DeepMind built SynthID to watermark every piece of content generated by Gemini, silently, invisibly, and at massive scale.

Billion images, videos, and text outputs carry this hidden fingerprint. It lives at the pixel level, designed to survive compression, cropping, even screenshots. The assumption: it’s unbreakable.

Then one independent engineer flipped the script, literally.

Instead of attacking complex images, he generated pure black and white outputs from Gemini. In these edge cases, there’s no content to mask the watermark — only the signal remains.

Using FFT spectral analysis, he mapped the watermark’s structure. From there, he built two things: a detector and a bypass that removes traces of the watermark.

No model access. No leaks. Just signal processing.

The bigger takeaway is that even the most “robust” safeguards in AI can be probed and broken.

As watermarking becomes central to AI authenticity debates, this raises a deeper concern. If detection systems can be reverse-engineered this easily, what does trust in AI-generated content actually look like going forward?

Startup In The Spotlight: Powerplay

Construction projects often rely on scattered workflows, with data spread across registers, Excel sheets, and WhatsApp groups. This makes planning, procurement, and tracking slow and manual, especially for mid-sized contractors who lack access to advanced tools.

Founded in 2020 by Shubham Goyal, Iesh Dixit, and Manish Prasad, Powerplay is building an AI-led platform to simplify project execution. It started by fixing data capture, offering a mobile-first system that replaces informal communication with structured, trackable inputs, while also integrating with tools like SAP.

On top of this, Powerplay has introduced AI agents to automate early stage project work. By analysing drawings, the platform can generate bills of quantities, material needs, and project schedules with around 85-90% accuracy. These agents use a mix of general AI models and construction-specific data.

Unlike traditional tools or service providers, Powerplay operates as both a SaaS and transaction-driven platform. It not only enables planning but also optimises procurement through a vendor network, capturing value across the lifecycle.

By focusing on mid-sized contractors, Powerplay is aiming to replace manual processes and become a single system for managing construction projects.

How A VC Runs Their Workflow On AI

For Elevation Capital principal Poorvi Vijay, AI isn’t just a theme to invest in, it’s how the job gets done.

A typical VC day is chaos. “I probably do 10 meetings a day,” she said, across founders, sectors, and shifting contexts. The challenge isn’t just volume, it’s staying sharp across everything — market signals, inbox overload, founder context, and follow-ups.

Her solution is a tightly integrated AI workflow.

Every morning starts with a unified AI-generated briefing pulling from Slack, Gmail, calendar, and notes. It tells her what needs attention, what’s urgent, who she’s meeting, and the context behind each interaction.

“It gives me a complete run of my day… what I need to focus on, what I need to respond to,” she explained.

Under the hood, this runs on internal tooling, custom MCP servers, connectors, and a dedicated AI adoption team stitching everything together.

The outcome isn’t just productivity, it’s decision quality.

In a world where something changes every day, AI has become the layer that helps VCs keep up with both speed and signals.

[Edited by: Shishir Parasher]

[Creatives by: Varshita Srivastava]