The internet has always relied on the assumption that the person on the other side of the screen is real.

But that assumption is starting to crack. AI tools can now create convincing faces, clone voices, and produce synthetic identities at a scale that security systems were never built to handle. The question of who is real online is becoming harder to answer.

A Miami-based startup believes the answer may be sitting in plain sight, literally in the palm of your hand.

VeryAI, a company focused on identity verification in the age of AI, announced this week that it raised a $10 million seed round led by Polychain Capital, with participation from Berggruen Institute and Anagram. The funding arrives alongside the launch of the company’s first product: a palm scan identity verification system that works on any smartphone and requires no external hardware.

For founder and CEO Zach Meltzer, the idea grew out of years spent working in crypto infrastructure and digital identity systems. As AI-generated content grew more convincing, and AI-driven fraud grew with it, he watched existing verification methods struggle to keep up.

“Privacy is a human right. But deepfakes and synthetic content present weaknesses that current systems simply can’t keep up with. VeryAI is restoring trust in identity verification by replacing outdated methods with solutions that are accurate, private and frictionless,” Meltzer said in a statement.

The rapid rise of generative AI has put new pressure on digital security systems across finance, social media, and other online platforms. According to the company, the time it takes hackers to compromise systems has fallen by 22 percent since 2023, and breaches now unfold in an average of just 48 minutes, leaving organizations far less time to respond.

At the same time, common identity tools such as facial recognition and two-factor authentication are showing their limits. Faces can be scraped from social media and used to generate deepfakes, while text-message codes are often intercepted or bypassed.

VeryAI’s bet is that palms offer a stronger biometric signal. Unlike faces or fingerprints, palm prints are rarely exposed publicly and are not widely stored in existing databases.

Apple's Face ID has a false acceptance rate of about one in one million. VeryAI says its palm verification achieves a rate of one in ten million for a single hand, and a far lower rate when both hands are used.

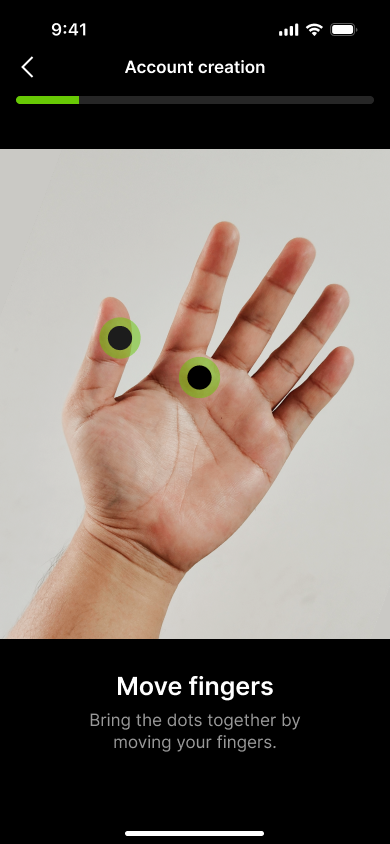

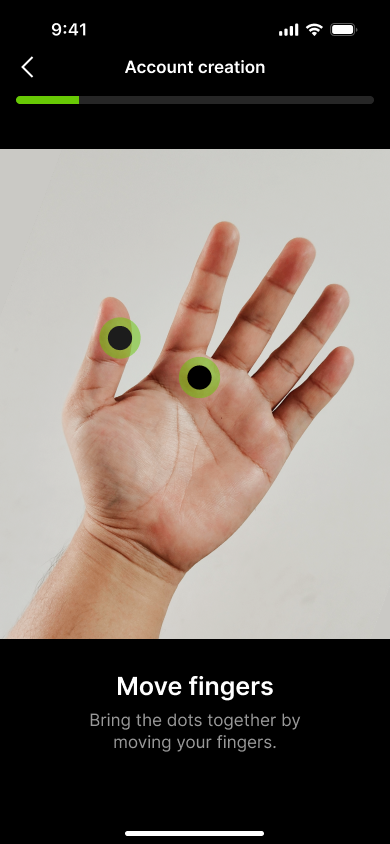

What makes the system unusual is that it doesn’t require special sensors. Users simply scan their palm with a smartphone camera.

With smartphone adoption in the United States now around 98 percent, the company believes identity verification could become as simple as holding up your hand to a phone.

Behind the technology is a team with deep roots in biometrics and emerging technology. Meltzer previously helped scale Galxe, a crypto loyalty platform that grew to more than 34 million users and over 6,000 partners. The company’s Chief Science Officer, Hua Yang, is a long-time researcher in palm biometrics with more than 50 academic publications and patents in the field.

Rather than storing biometric images, VeryAI says its system converts palm scans into mathematical signatures that cannot be reverse engineered into the original image.

For Meltzer, the goal is to balance security with privacy at a time when both are under pressure.

“Having helped build identity solutions for millions of crypto users, from KYC and reputation scores to ZK Protocols and credential systems, I’ve seen both their value and their limits in the face of AI-driven fraud. VeryAI is building the future of identity verification,” he said.

The startup plans to sell the system to businesses through a B2B model, allowing fintech companies, exchanges, government institutions, and social platforms to integrate palm verification into their existing systems.

Beyond identity checks, VeryAI is also exploring tools that verify human ownership of AI agents and systems that help distinguish authentic media from synthetic content. The company recently began a research partnership with Northwestern University, working with Professor Matthew Groh’s Human-AI Collaboration Lab to study how people detect deepfakes and how technology can help.

READ MORE IN REFRESH MIAMI:
