I’ve written extensively of late on Nvidia’s AI roadmap for the rest of this decade, as it transitions from its Blackwell AI CPU/GPU data center infrastructure to its multi-pronged, dense Vera Rubin and Feynman platforms and beyond.

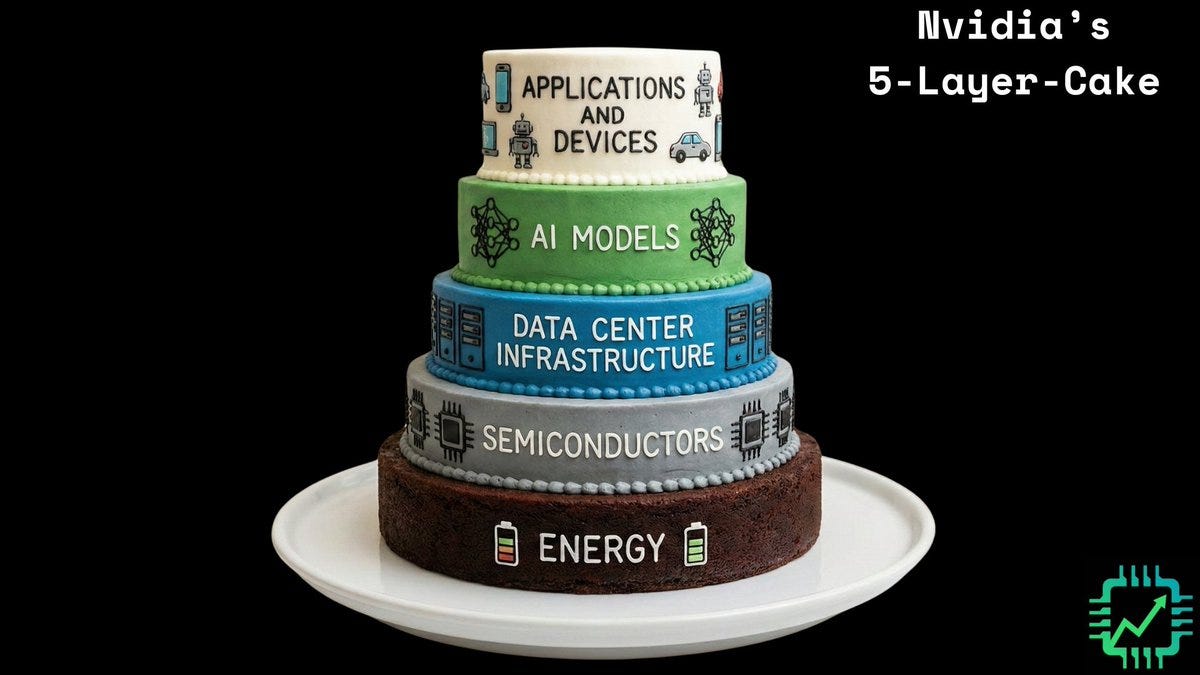

These CPU and GPU platforms have a laser focus on state-of-the-art AI, from training to inference and everything in between thus far in this AI Tech Wave. They are built around what Nvidia has described as the 5 layer AI cake.

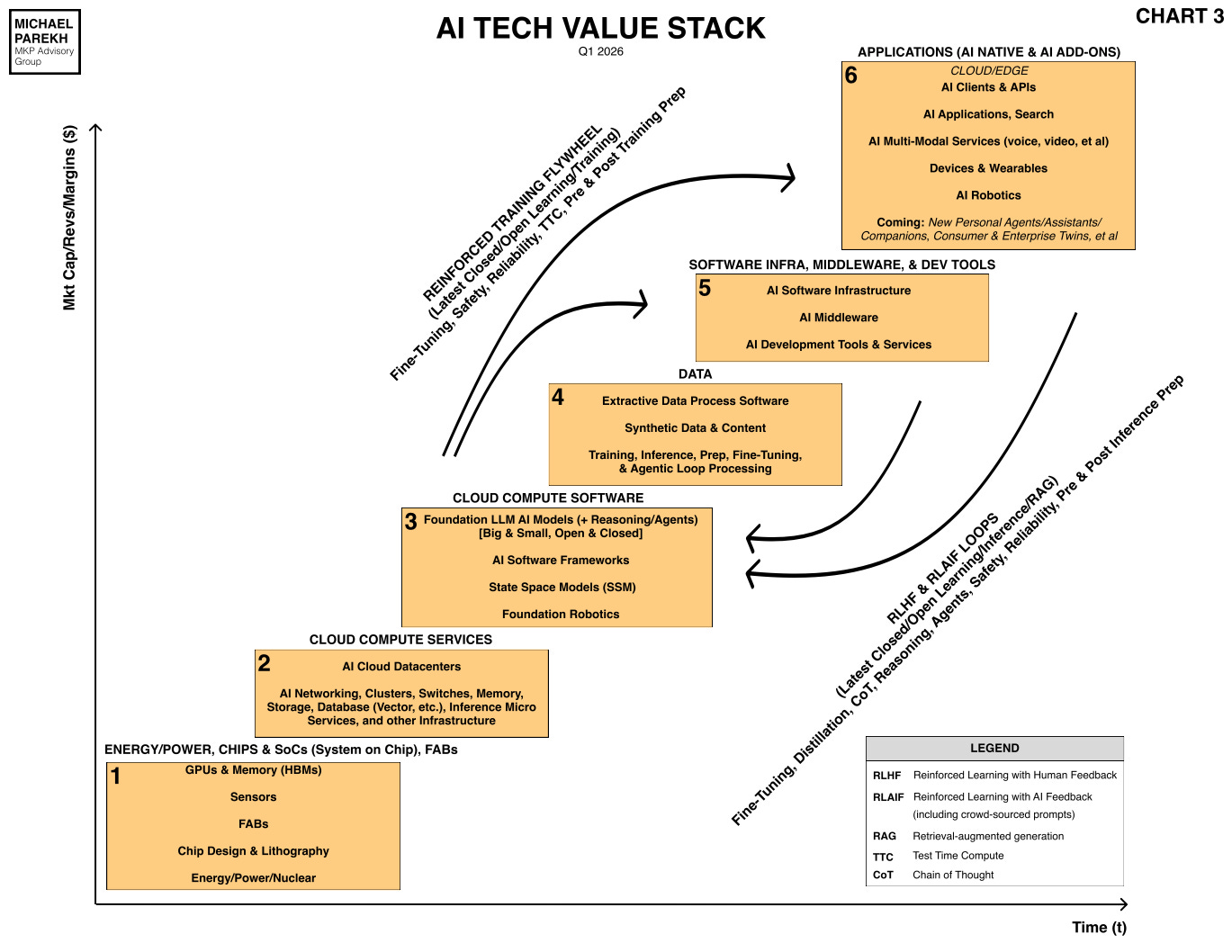

And given the company’s massive financial resources as the largest tech company in the world, neck and neck with Apple and others, Nvidia has ever growing flexibility in partnering, acquihiring, and generally playing ‘Kingmaker’ in boxes up and down the AI Tech Stack that I constantly use, illustrated below.

It comes complete with the all-important Data layer in box #4, the AI training and inference reinforcement learning loops covering the upper boxes, and the time and financial axes, X and Y.

I’ve written about one such Nvidia ‘Kingmaker’ example in CoreWeave, the leading ‘Neocloud’ company, and an early Nvidia investment, and partnership, long before its IPO.

CoreWeave is currently building AI Data Centers for some of Nvidia’s biggest customers including Microsoft, OpenAI and others. Circular deals and beyond.

It does so by leveraging its access to copious amounts of Nvidia’s supply-constrained, latest and greatest chips and other infrastructure gear, as SemiAnalysis describes in detail. That access matters especially now that Nvidia is surpassing Apple as a customer of chip fab ‘Kingmaker’ Taiwan Semiconductor (TSMC) this year.

The Wall Street Journal explores this Nvidia ‘Kingmaker’ role in “How Nvidia CEO’s Night at the Opera Showcases Role as AI Kingmaker”:

“Thanks to the astronomical demand for its chips prompted by the global AI boom, Nvidia generates more profit than almost any other public company on the planet. The chip giant has used its fast-growing war chest to become the industry’s most powerful financier, investing tens of billions of dollars in promising startups and supporting key customers who would otherwise struggle to afford its chips.”

“Nvidia says that the deals grow the broader AI ecosystem, and they indeed provide crucial financial backing for companies crushed by the high costs of building the technology. But they also have another effect: keeping customers hooked on Nvidia’s products and steering them away from rival chip providers.”

“Nvidia’s investments don’t come with explicit requirements to use the money for its technology. Yet some companies are so dependent on its financial support that they have essentially ruled out the possibility of using non-Nvidia chips, even if Nvidia doesn’t prohibit them from doing so.”

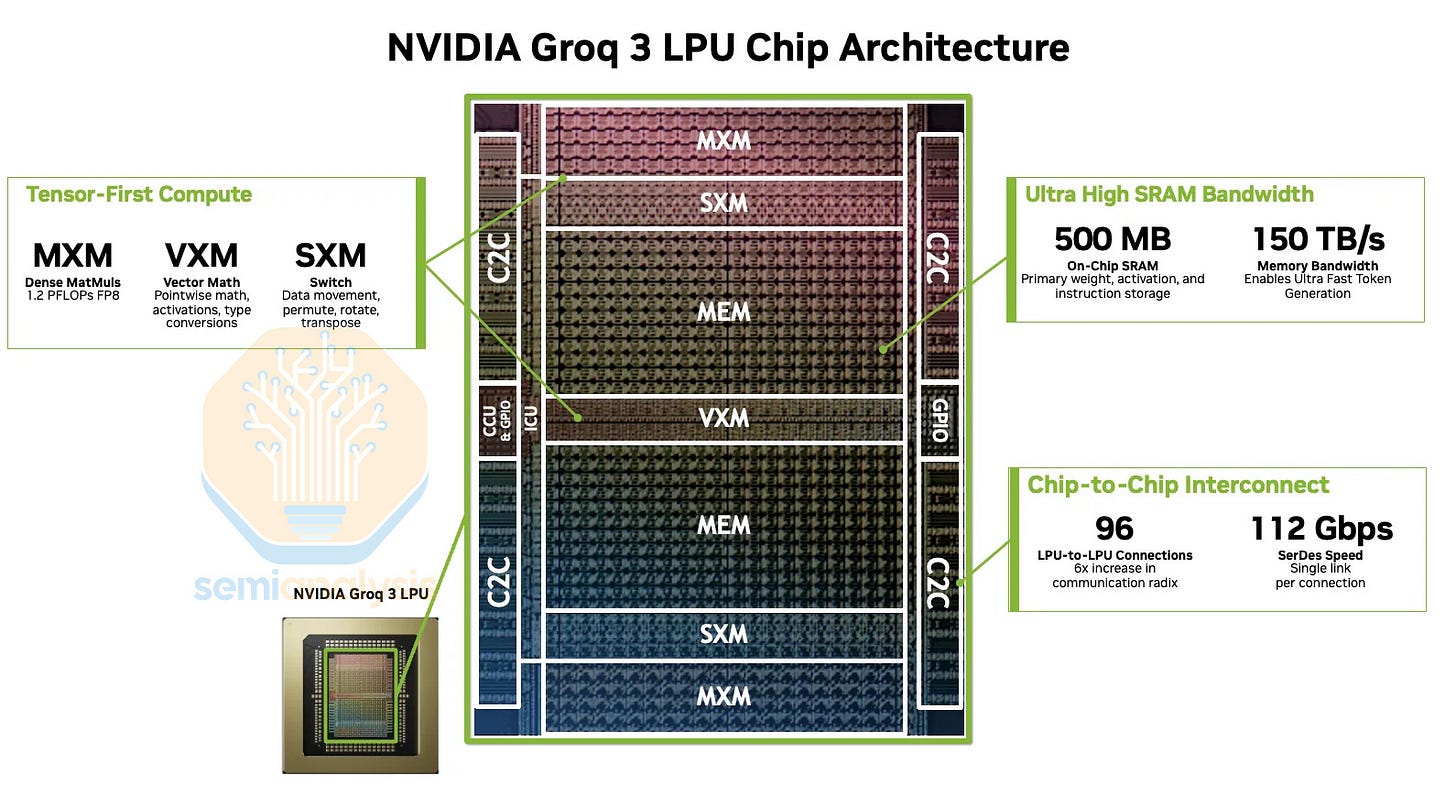

I’ve discussed Nvidia’s $20 billion acquihire of inference startup Groq just four months ago, and how it has already resulted in Nvidia’s new Groq 3 LPU inference product line, being rolled out with Samsung’s help later this year.

The WSJ goes on to describe how Nvidia is nurturing another relationship on the open source AI front with startup Reflection:

“Misha Laskin is a Russian-Israeli researcher who left Google to start Reflection, which is trying to build open-source AI models whose underlying code is freely available for anyone to use and modify. Last year, the startup began searching for a big backer to support its research agenda.”

“Sequoia Capital, a venture firm that displays a glass-encased Ferragamo black jacket of Huang’s at its Menlo Park headquarters, brokered a meeting between Laskin and Huang. At the time, Laskin knew that Huang was eager to support U.S. open-source models—and make sure they ran on Nvidia chips.”

“Nvidia had written in a recent earnings report that if open-source models used chips built by competitors, it could limit demand for its products and services. The company made open-source a huge focus of its developer conference this month, announcing a new coalition of startups, including Reflection, that would work together to build the technology.”

Nvidia leaned into the Reflection AI opportunity:

“Huang agreed to make Nvidia the financial anchor for a Reflection funding round, committing half of the $1 billion target. Then he encouraged other venture firms to invest as well. By the time Laskin arrived at the opera reception, he had raised $2 billion. Nvidia ended up investing roughly $800 million.”

“Much of that money will flow back to Nvidia, whose engineers are working with Reflection to build a giant cluster of GPUs that the startup will use to train its models. Nvidia is also introducing Laskin to potential customers, including top U.S. companies and representatives of foreign governments who are looking to build their own “sovereign AI” technology.”

“Laskin has also privately told investors that there could be a revenue sharing agreement between Reflection and Nvidia if their technology is integrated and sold together.”

“One large investor in Reflection called the company a “business arm” of Nvidia. At a recruiting event last year in London, one of Reflection’s executives put it more bluntly when speaking to a potential hire: “When you are talking to us, you are talking to Nvidia.””

The whole piece is worth a full read, especially with its informative charts and graphs. I would also recommend this Stratechery podcast interview with Jensen Huang, and this Lex Fridman podcast interview for the Nvidia/Jensen Huang information gluttons.

To summarize, Nvidia is focused on a whole host of other AI initiatives further up the tech stack, all the way to the application layer. This is where Nvidia’s deals with a range of companies are important to track. Companies like Thinking Machines, Perplexity, Reflection AI, and others.

Reflection AI, described in detail above, is of note because it’s a recent US startup focusing on open source LLM AI opportunities, now that Meta is focused on closed models, deviating from its earlier leadership with open source Llama models.

The broader point is that, like its peers and top hyperscaler big tech customers, Nvidia is taking an active ‘Kingmaker’ role across its moats in this AI Tech Wave.

It is doing so by investing, partnering, and where possible acquiring AI opportunities up and down the tech stack. And it has greater financial resources to do so than its top customers and tech peers.

And that’s a distinction that makes Nvidia the relative King in this IPO-focused landscape. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)
